ADVANTAGE AI translates global standards into implementable assurance practices. We provide rigorous methodologies that identify the blind spots standard frameworks miss, delivering the evidence regulators now expect.

AI potential into AI performance

Building Confidence in AI Through Operationalised Rigour

GLOBAL BOARD ASSURANCE

Ensuring your AI investments don't become your AI burden.

Standards Without Practice: The Gap in AI Governance

Customer requirements and regulations tell you "what" is required.

ADVANTAGE AI services show you "how" to meet them.

Amplification of the Average

AI models are often trained to maximise statistical likelihood: they learn to predict the most probable pattern. While this makes them excellent at handling common, high-volume situations, it means that rare but critical scenarios can be systematically underweighted or ignored.

Automation Complacency

Research shows that when teams see fluent, authoritative-looking AI analysis, they follow it significantly more often, even when the AI is wrong. This automation bias can reduce the critical vigilance needed to detect failure, amplifying errors precisely when independent judgment is most vital.

Personal Accountability

The UK's principles-based approach to AI relies on existing regulators. In the financial services sector, the Financial Conduct Authority's Senior Managers and Certification Regime (SM&CR) holds executives and senior managers personally accountable for failures within their areas of responsibility, including failures of AI systems.

Pre-Commitment Capital Discipline

AI Investment Assurance

AI investments are often reported as failures not because models fail, but because the organisation wasn’t ready. AI Capital Governance is a structured framework that evaluates whether proposed or existing AI investments are economically sound, risk-adjusted, operationally sustainable and resilient - before capital is committed at scale.

Operational Fragility Containment

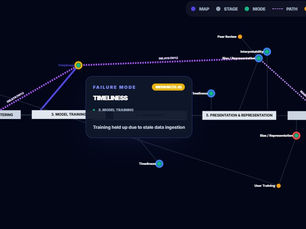

AI Failure Path Mapping

The risk profile shifts as companies integrate AI. It is no longer just about external attacks; it is about the fragility of internal complexity. An AI model can remain mathematically "accurate" while processing corrupted data, and the outputs can still lead to poor operational and executive decisions. AI Failure Path Mapping (AI-FPM) reveals how seemingly minor failures can propagate into those critical decisions.

Institutional Intelligence Continuity

AI Cognitive Resilience

As AI systems become embedded in core workflows, organisations are externalising institutional knowledge into AI environments. AI Cognitive Resilience extends proven resilience disciplines into the domain of organisational cognition. Understand exactly what institutional knowledge lives inside your AI environments, where your cognitive dependencies are concentrated, and what it would cost - in time, money, and performance - to recreate it if lost.

Assurance Outcomes

ADVANTAGE AI conducts independent assurance using three rigorous methodologies, each addressing a critical blind spot in AI governance frameworks.

The evidence-based evaluations provide:

AI INVESTMENT ASSURANCE

Answers: "Can we invest in AI with confidence - and how do we prove that?"

✓ Identification of untested critical investment assumptions

✓ Governance maturity baseline and progression

✓ Investment decision framework with explicit gates

✓ Capital preservation through portfolio discipline

AI FAILURE PATH MAPPING

Answers: "Where can AI fail, and how do we detect, prevent and contain it?"

✓ How component failures cascade into regulatory breaches

✓ Where to deploy circuit breakers with greatest impact

✓ Action plans for remediation

✓ Incident playbooks for operational resilience

AI COGNITIVE RESILIENCE

Answers: "What intelligence are we externalising into AI, and can we survive without it?"

✓ Where institutional knowledge has migrated into AI systems

✓ Cognitive dependency concentration and single points of failure

✓ Maximum tolerable cognitive downtime and loss

✓ Board-reportable intelligence risk measures

Why This Matters

For executive leaders, principles alone are insufficient.

Strategic Experimentation

The prevailing metric for AI success - production deployment - is a strategic error.

In a regulated environment, a "failed" proof of concept (PoC) can often be a successful audit of risk.

Organisations with the highest returns don't run more PoCs. They run better PoCs.

The difference is decision quality.

SM&CR Reality

Senior managers in regulated firms face personal accountability.

The question isn't...

"Is our AI governance good?"

The question is...

"Can we prove our governance is rigorous?"

The ADVANTAGE AI methodologies provide that proof.

Regulatory Expectations

Regulators are moving from principles-based frameworks to evidence-based expectations.

They want to see:

- How you identified risks

- How you managed knowledge dependencies

- How you made investment decisions

Standards tell you what to do.

We show you how to prove you did it.

THE AI-FPM SERVICE

AI Failure Path Mapping

AI-FPM is our independent, board-level assurance service that rapidly exposes the hidden weaknesses in AI-enabled systems. We identify how failures can silently propagate into customer harm, regulatory breaches or financial loss.

- Board-level assurance for AI decisions

- Rapid exposure of propagation paths

- Regulatory and customer impact analysis

- Prioritised remediation roadmap

Learn how AI-FPM protects your board

- We don't sell you any AI products or platforms.

- We don't try to convince you AI is a solution for all your problems.

Loss of Customer Confidence and Trust, Combined with Regulator Penalties, Can Be Significant

Using AI can be easy. Safely implementing AI in the workplace can be complex.

Implementing AI may be new, but it faces many of the same challenges we've been managing in regulated enterprise environments for years.

Losing customer confidence and trust because of weak governance can be catastrophic, and regulator penalties can be significant.

"Only 25% of any AI implementation is about the Technology."

Microsoft AI Tour, London, October 2025

Our independent review gives you the clarity and reassurance you need to manage your management, technical and functional risks.

AI Insights for Informed Decision-Making

Stay ahead of the curve with our latest blog posts

Company Founder

PETER GROSS

CITP, MBCS

40 years at the frontier of emerging technology. Regulation. Risk. Investment. Execution.

Peter specialises in preventing AI investment regret - ensuring AI roadmaps are commercially defensible, operationally viable and governance-calibrated before scale commitments are made.

Creator of AI-FPM™, a structured methodology identifying execution, governance and operational failure points before investment decisions are locked in.

He operates in environments where the cost of mis-execution is material:

- Bank of England

- London Stock Exchange

- Royal Bank of Scotland

- Deutsche Bank

- Aviva

- MS Amlin

- AXA XL